The Online Safety Bill: Censorship, Control and Wishful Thinking

A critique of the Online Safety Bill and the implications it has for British society.

Preface

The Online Safety Bill proposes to save the children (British children, your children) from the awful content posted to the internet by imposing safeguards such as risk assessments on content and criminal liability for "tech executives" who fail to meet their "obligations".

There are also provisions to distinguish between content that is legal yet harmful and content that is illegal. Guidance on such content is supposed to be issued by Ofcom, the UK's communications regulator, but the internet is far more complex than anything Ofcom has ever regulated, and the regulator is chronically underfunded and understaffed, employing only 902 people. Herein lies the first question: how would Ofcom manage this extra work? Don't forget that before David Cameron became PM, he pledged 'With a Conservative government, Ofcom as we know it will cease to exist… Its remit will be restricted to its narrow technical and enforcement roles. It will no longer play a role in making policy. And the policy-making functions it has today will be transferred back fully to the Department for Culture, Media and Sport'. The implication is that the Conservatives did not want Ofcom deciding definitions or making judgements that could amount to changes in the law; yet they are now moving to give Ofcom exactly that wiggle room, as well as a brand new Ombudsman.

In a swipe at encryption, legislators are also proposing to force platforms to "scan" messages and attachments for "harmful content" before they are encrypted "end to end" and sent to their destination, on pain of fines and other sanctions.

Ofcom, whilst billed as the communications regulator, has traditionally regulated TV and radio broadcasters, which are required to have a base in the United Kingdom, making it possible to set guidelines. In the case of radio, I can speak from experience, having worked in radio broadcasting, first at Minster FM and its sister stations, then in the set-up of YO1 Radio. Ofcom's regulation of these stations worked because, with the stations based in the United Kingdom, it was able to set policy guidelines, issue broadcast frequencies and handle any complaints that arose.

The problem with Ofcom regulating "the internet" is that platforms might not have a base in the United Kingdom, making it all but guaranteed that regulation will be applied in broad strokes rather than in a reactive or discretionary way, overriding fundamental values Britons have held for hundreds of years. The result will be legislation that strikes at the heart of our culture and value system. For example, WhatsApp, owned by Meta, could theoretically operate with no infrastructure or business entities in the United Kingdom at all. It would require a huge shift in its operations, but it's not impossible, and it is an idea WhatsApp has floated (BBC version, Guardian version).

It wouldn't be the first time they have shut off service to a country, either: they have done so in Qatar, China, Iran and other countries that sought to undermine encryption, some of them with populations much larger than the UK's, one might add.

Smaller operators, such as bloggers, could also easily migrate data, servers and domains to other jurisdictions. This legislation will therefore drive a stake straight through the heart of the fundamental principles of Western democracy whilst also making British business and startup culture unprofitable. An example can be seen in Revolut, a "fintech" company which recently criticised the UK tech scene as over-regulated, overpriced and unhelpful to those who seek to make big changes, complaints I can empathise with given the mountains of paperwork, regulation and legislation that killed Parentull.

If the absurdity of the above hasn't been enough to raise the hairs on the back of your neck, perhaps the beliefs of campaign groups and legislators might. Hearing campaigners and legislators claim that legislation can override science, history, human psychology and freedom of expression (however distasteful, immoral or unpopular that expression may be) gives me chills. It shows a lack of understanding of the subject matter at hand. For instance, ARPANET, a precursor to today's Internet, was built as a military project to withstand nuclear attack and keep U.S. military infrastructure connected. Today's Internet is even better at routing around filters, blocks and age verification; getting around them can be as simple as flicking a switch on a VPN, making any legislation redundant before it is passed. The failure of legislators to understand this is concerning because it goes against very basic principles of science in today's world whilst neglecting a history shaped in part by British computer scientists.

I have previously drawn comparisons between internet censorship and the persecution of Jews and Catholics in England. In particular, I have used priest holes, where Catholic priests hid as the authorities hunted them for practising "the wrong religion", as a historical example of how humans will go to any lengths to express themselves, even bribing craftsmen to build secret rooms in their homes. Similarly, the massacre of Jews at Clifford's Tower shows that legislation and high walls often mean nothing; many people wrongly believe higher walls mean safety, but they can just as easily act as a prison. In that massacre, the Jewish community, resented by the local population, fled behind the walls "under the king's protection", yet neither the king's men, nor the high walls, nor the threat of repercussions could save them from a horrific fate. The same will happen with this legislation, but it will affect a much broader swathe of people. Of course, I don't mean that people will be jumping from castles, but we have already seen mobs calling for people to be cancelled for "wrong think", and legitimising such behaviour in legislation is effectively the same as medieval persecution, because it allows "harmful" to be interpreted in whichever way Ofcom deems appropriate, or whichever way is popular at the time, or, more likely, whichever way the baying mob demands.

It is incredibly sad to see campaigners and legislators using the Online Safety Bill as a trojan horse, building high walls to torpedo freedom of expression. More alarming still is that legislators such as Dame Margaret Hodge appear to overlook that the High Court has ruled the public has a fundamental right to be offensive; that ruling is now being ignored in order to police "online" speech. In his opening remarks, Mr Justice Julian Knowles quoted another ruling, R v Central Independent Television plc [1994] Fam 192, 202-203, in which Hoffmann LJ said that: "… a freedom which is restricted to what judges think to be responsible or in the public interest is no freedom. Freedom means the right to publish things which government and judges, however well motivated, think should not be published. It means the right to say things which ‘right-thinking people’ regard as dangerous or irresponsible. This freedom is subject only to clearly defined exceptions laid down by common law or statute." Concluding his lengthy ruling in Harry Miller v Humberside Police, Mr Justice Knowles stated: "There was not a shred of evidence that the Claimant was at risk of committing a criminal offence. The effect of the police turning up at his place of work because of his political opinions must not be underestimated. To do so would be to undervalue a cardinal democratic freedom. In this country we have never had a Cheka, a Gestapo or a Stasi. We have never lived in an Orwellian society."

I don't expect my blog to change anything, though I do intend to share it with others for their views. I wanted to write it to get my frustrations out as a computer scientist and tech professional, but also as an extremely proud British national. For most of my life, I have viewed the United Kingdom as a straightforward, common-sense nation, but over the last decade I believe both of those qualities have slipped dramatically.

I'd concur with Lord Sumption when he says that "standards of parliamentary drafting have gone down over the last 100 years", but I believe the problem is worse than that. The West seems to be entering a period of fragility in which, like Salvador Dalí's elephants, it is held ever further from reality on weaker and weaker stilts as the rest of the world looks on. No better case could be made than the Online Safety Bill, where "harmful content" is treated as the ultimate evil whilst Russia wages war in Ukraine, China harvests the organs of, and commits genocide against, Muslims, Japan kills dolphins and whales for sport, slavery is still alive and well in Africa and the Middle East, and women and children in India are debt-bonded to brick kiln masters across generations.

At the time of writing, the Online Safety Bill is at Committee Stage in the House of Lords, so it is likely to be subject to further amendments; such amendments will not be reflected in this blog. I am writing about the current state of the Online Safety Bill.

Think of the children

Listening to the debates and reading through the legislation, one gets the impression that campaigners and legislators are trying to do something positive about children accessing harmful content online. I say "trying" because the legislation is doomed to failure, despite the best efforts of legislators.

In the January 2023 debate in Parliament, multiple MPs stood up and recalled stories from their constituents about online grooming, suicide attempts and social media trends which gave them cause to support the Online Safety Bill.

Andrew Gwynne (Labour) intervened to state "My hon. Friend is making an important point. She might not be aware of it, but I recently raised in the House the case of my constituents, whose 11-year-old daughter was groomed on the music streaming platform Spotify and was able to upload explicit photographs of herself on that platform. Thankfully, her parents found out and made several complaints to Spotify, which did not immediately remove that content. Is that not why we need the ombudsman?"

It's good that Andrew is raising child sexual exploitation and trying to fix the problem, but let's examine what Spotify actually does.

To start, there is no messaging function at all. It just doesn't exist, so we can assume that if the 11-year-old was being groomed, her groomer already had access to her outside of Spotify. Isn't this a problem of parental oversight? What could a regulator or ombudsman do here that couldn't be fixed by parents... parenting? If parents are not checking their children's phones to see who they are messaging, and asking "Hey, why are you messaging this stranger and why is s/he asking these bizarre questions?", then no amount of intervention from an ombudsman or platform is going to help. I'm sure a swathe of people will be uncomfortable with the idea of parents checking their children's phones, citing privacy, but I'd ask which is less harmful: a parent checking a child's phone or a child being sexually exploited.

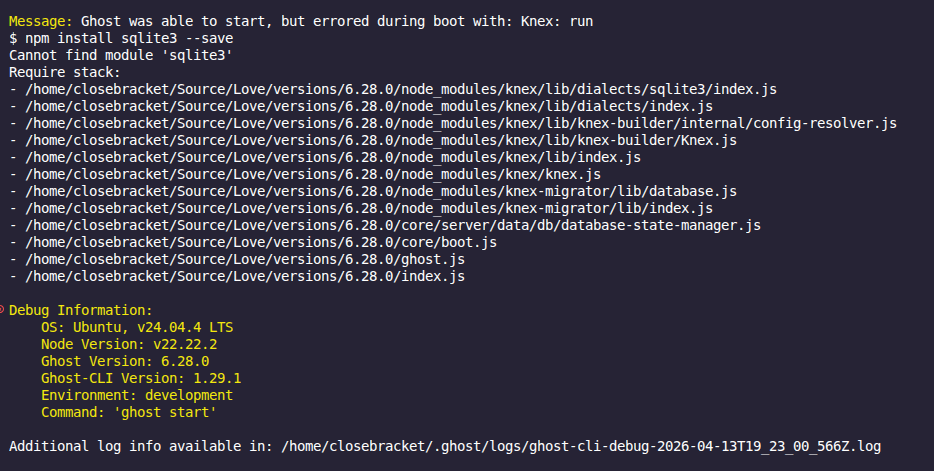

Next, the only way for a user to upload photos is as playlist cover images. Spotify resizes these photos so that they display as in Figure A. It may be possible to use tools such as wget to fetch the full-size photo from Spotify's servers, but that seems like a lot of effort if the groomer already had access to the child via another platform or even offline. Is it possible that Andrew simply got the platform wrong because he's not that tech-savvy? I think it's about 50/50.

I concede that such a photo, on any platform, could be used as leverage over a young person, but we still come back to parental responsibility. It should not be up to the state to criminalise tech executives or act as an overarching nanny because parents neglected to check their child's phone, especially a child as young as 11 who arguably should not have access to social media in the first place. It's worth noting that most platforms require users to be 13... at least until mummy and daddy give in to the nagging. I would also argue that it is not within the state's power to undermine encryption or force platforms to carry out wholesale scanning of messages and content prior to encryption.

Of course, Spotify should have removed the explicit content (if it was uploaded there), but I feel the real issue is preventing such content in the first place by educating parents, who presumably would rather their children shut up and went on their devices than bothered them. Perhaps if families were closer and technology use limited, such issues would not be so widespread. I should also add that older generations have a huge technology knowledge gap. See this blog post for more information.

In the Lords Debate on 2nd May 2023, Baroness Kidron stated "My Lords, I support the noble Baroness, Lady Ritchie, in her search to make it clear that we do not need to take a proportionate approach to pornography." [....] "In making the case for that, I want to say very briefly that, after the second day of Committee, I received a call from a working barrister who represented 90 young men accused of serious sexual assault. Each was a student and many were in their first year. A large proportion of the incidents had taken place during freshers’ week. She rang to make sure that we understood that, while what each and every one of them had done was indefensible, these men were also victims. As children brought up on porn, they believed that their sexual violence was normal—indeed, they told her that they thought that was what young women enjoyed and wanted. On this issue there is no proportionality."

I want to point out the rather large leap from "We need to stop children accessing harmful content" to "We need to block porn". I said in the Preface that we would be dealing with broad, sweeping legislation, and within two debates we are already there. The Baroness makes a cleverly disguised argument, because she uses young men discovering sex, and perhaps being falsely accused of sexual assault, as a motive for blocking porn. In the same way that it would be highly unfashionable to criticise measures to protect children from sexual exploitation, the Baroness makes speaking out against age-restricting porn in the United Kingdom unfashionable too. After all, what reasonable adult wants women to be sexually assaulted because horny, testosterone-charged young men consume too much porn? I'm not sure I'd agree that porn is damaging or harmful; personally, I've never had the inclination to sexually assault a partner because I'd been watching porn, but maybe that's just me. (shrugs)

What's interesting is that later in the debate, Lord Bethell suggests "It does not matter whether it is run by a huge company such as MindGeek or out of a shed in London or Romania by a small gang of people. The harm of the content to children is still exactly the same." There have been previous attempts to "block porn", first when David Cameron was Prime Minister and again under Theresa May, who brought in the "Snoopers' Charter"; these blocks obviously failed. What's more, there has been a very successful campaign with religious, moralistic roots in the US, run by a group calling itself "The National Center on Sexual Exploitation" (NCOSE), pushing for an end to porn. The campaign saw some success after winning a lawsuit against Pornhub/MindGeek, which was found to be hosting user-uploaded child porn. The campaign has since spread to targeting all user-generated porn, which content creators say damages their income and infringes on their right to make and distribute images of themselves. This fight might be coming to the Online Safety Bill, given the scale of platforms being targeted.

Lord Knight of Weymouth continues, "In my Second Reading speech I alluded to the problem of the volume of young people accessing pornography on Twitter, and we see the same on Reddit, Discord and a number of other platforms", yet nothing has been said about young people, in particular women, creating and distributing porn on those platforms. Reddit's "r/gonewild" and other associated NSFW subreddits are made up of user-generated content submitted freely by women (and men, but mostly women) around the world. Adding to the complexity of the issue, there is even a subreddit called "r/rapefantasies" where women share fantasies about being raped.

This sort of "porn" is nothing like the "harmful", commercially driven, silicone-filled mainstream dickdowns on Pornhub. It shows real women and real bodies and sets real expectations, even if the fantasies and fetishes are not mainstream; the key in each community is consent, and even in the "rape fantasies" community, consent is a factor. Nevertheless, NCOSE is pushing for Reddit to ban porn. A moderator for r/cumsluts, a 3-million-subscriber community for adult content, said "If they cause enough fuss in the media, over and over, eventually Reddit will decide it's not financially worthwhile to stand up for sanity, and they'll just nuke porn out of convenience, eventually groups like NCOSE will get porn outlawed from the web in general. It's just a matter of time, and reintroducing the laws several times under different acronyms until people get tired of fighting." (sauce).

Is there really much difference between NCOSE trying to ban porn based on its moral beliefs and the Online Safety Bill? NCOSE uses the same argument, protect the kids, but its real intent is censorship and control. Thankfully, the Lords are only considering "age verification", but even that is a control mechanism imposed on citizens who should not have to jump through government verification systems in order to consume sexual content.

There's a wider issue on the subject of porn. I have yet to find a Commons or Lords contribution about those who make porn, as outlined above. The vast majority of those who make it are women, so a small group of legislators is dictating what millions of people, mainly women, are allowed to do with their bodies and sexual preferences. It's a huge irony for me, because I'm usually very anti-feminist, at least if we're talking about the Charlotte Proudman types, but this time I find myself on the feminist side. It is not up to legislators to dictate when, how or why women can post NSFW content to the internet for their own or others' pleasure.

Back in the House of Commons, Dame Caroline Dinenage rose to state "[....] The potential threat of online harms is everyday life for most children in the modern world. Before Christmas, I received an email from my son’s school highlighting a TikTok challenge encouraging children to strangle each other until they passed out. This challenge probably did not start on TikTok, and it certainly is not exclusive to the platform, but when my children were born I never envisaged a day when I would have to sit them down and warn them about the potential dangers of allowing someone else to throttle them until they passed out. It is terrifying. Our children need this legislation. [....]"

The Dame demonstrates how those at the top of Maslow's hierarchy of needs cannot fathom how those on the lower rungs might see this as entertainment or socialisation, or how it might simply be the result of dysfunctional family dynamics. If Dame Dinenage interacted with more working-class people, she would understand that not everyone has a perfect family; families are often broken to the point where role models are sparse and "friends" can be violent, yet the yearning for social connection and acceptance is huge. Limiting online content will not stop such behaviour (as she herself admits, "it certainly is not exclusive to the platform"); it will only serve to hide it, cleansing the internet of the unwashed, poor, neglected and unsightly, whom society should uplift but does not.

All of these arguments are underwhelming reasons for creating the Online Safety Bill. Shouting "Think of the children" into the void might win fanatical support, but the results will be poor. As with the porn block suggested by David "Pig Fucker" Cameron's Government, the technical proficiency of younger people will ensure Ofcom and online platforms are always playing catch-up. Around the turn of the millennium, 15-year-olds were hacking NASA; in that particular instance, software controlling the International Space Station was stolen along with passwords, emails and employee-level systems access. More recently, British 15-year-old Kane Gamble socially engineered his way through the security protocols of American intelligence agencies, gaining access to intelligence data on live deployments in Afghanistan and Iraq, and going further still to access the personal devices of his target.

If these highly secure organisations cannot keep their data safe from teenagers, why does the government believe that platforms such as Facebook, Twitter and Discord will be able to prevent children and teens from viewing "harmful" content? There seems to be an assumption that younger people need to be protected, when in actual fact they are more tech-literate than their elders, even if they lack in-depth knowledge of the protocols and the legal implications of their actions. More to the point, what makes the government and campaigners think that such platforms won't do the bare minimum and carry on as normal?

Legal, yet harmful

One of my main gripes with the Online Safety Bill is the "legal yet harmful" definition. Surely, if speech is legal, then it shouldn't matter whether it's "harmful"; otherwise we are not living in a free society. Of course, there are exceptions where speech or content harms another person. One example is libel: if "A" publishes some slur or falsehood that demeans my character, that is harmful, but it is not a matter for Facebook, Twitter or even the police; it's a matter for me to take up in the civil courts if I so wished.

In a written statement in July 2022, the indicative list of "harmful content" shown in Figure B was published. Note that we are no longer just dealing with children accessing porn; we are directly interfering in adults' lives and creating room to broaden what constitutes "harmful content" later. It's worth mentioning that online abuse and harassment are already illegal under various other legislation, as is the "circulation of real or manufactured intimate images", aka revenge porn, which is covered by the Domestic Abuse Act 2021. As such, I don't understand why they need to be included in this specific "Online Safety" bill.

I cannot say I really know the issues around self-harm or eating disorders, so I will not comment on them. On suicide, however, I personally believe everyone should have the right to choose when they end their lives. In fact, I read this rather interesting article from 2016 about a man who helped others commit suicide, and he raises some good points about assisted suicide, so I firmly believe legislators are on the wrong side of that debate. The same could be said of "harmful health content"; platforms are already addressing this by providing context or flagging sources, but ultimately people will only believe what they want to believe. The paradox is that the more legislators try to force a narrative or "one single source of truth", the more conspiracy theories it will create.

During the 16th May 2023 Lords debate, Baroness Newlove raised the point that 40% of children did not report "harmful content" [to platforms] because they felt there was "no point in doing so", going further to suggest that there was a need to “provide an impartial out-of-court procedure for the resolution of any dispute between a person using the service and the provider”. This again raises the question: if the content is legal, why does the government think it has the right to impose any regulation over it, when it should be dealt with either by the platform or in the civil courts?

Lord Stevenson of Balmacara pointed out that Government ministers had already stated in February that “Ombudsman services in other sectors are expensive, often underused and primarily relate to complaints which result in financial compensation. We find it difficult to envisage how an ombudsman service could function in this area, where user complaints are likely to be complex and, in many cases, do not have the impetus of financial compensation behind them”. These are very persuasive arguments. Why would I go to an Ombudsman with about as much authority as a chihuahua instead of going directly to court? That's my preference, of course, but I imagine an Ombudsman would be inundated with claims should one be established. I can already envisage council estate drama queens and village green preservation society types lodging claims against anything and everything that offends them. How dull the internet will become once that happens.

That brings me to the most intriguing point. The ombudsman will supposedly be paid for by a levy on regulated companies. So we're going to tax companies, have them undermine encryption and sell out their customers' data and privacy, and force them to pay compensation to all and sundry who complain about content on their platforms as well? You can see why businesses might not want to operate in the UK when "legal yet harmful" means "legal yet costly". The other side of that coin is that very, very wealthy platforms might just... corrupt the ombudsman?

I wonder what sort of content legislators think IS acceptable. For instance, what about:-

Figure C - The Curious Case of Dalia Dippolito

Figure C is an in-depth look at a domestic abuse case in which a woman tried to hire a hitman to kill her husband and inherit his wealth. I'd argue it's upsetting purely because of its nature (human nature, psychology and criminality), but arguably also because it shows that women are not the frail, fragile little goddesses they are made out to be. It shows that they can be psychopathic abusers.

Or how about:-

Figure D - Pimp My Reich

Figure D is an intentionally satirical and offensive "Pimp My Reich" video. It is offensive for quite obvious reasons, no words needed, but equally I'm not sure I'd want it censored, as I see the funny side of laughing at the stupidity of Nazis.

If there is one thing I'm sure of, it's that "legal yet harmful" content is an area where legislators are really overreaching their authority. In my view, society and the internet will be much worse off for it. Whatever their best intentions in helping the most vulnerable in society gain a voice, that should not come at the expense of everyone else.

Global legislative efforts

What I find fascinating is that there are legislative efforts similar to the Online Safety Bill across the globe. I'm sure it's a coincidence, but one could be forgiven for concluding that these efforts are meant to aid espionage, surveillance and information control, given how broadly they undermine the fundamental rights of populations as well as technological absolutes. It is also no surprise to me that such efforts are being made across all Five Eyes nations and their allies. I also find it particularly interesting that UK police forces are buying up Grayshift GrayKey hacking devices at the same time as the government is rolling out bills to undermine encryption and privacy.

In America, the Senate has been trying to ram through variations of COPPA 2.0 and the EARN IT Act. COPPA 2.0, a bill which has been introduced three times using the "protect the kids" argument, would force platforms not to track children's personally identifiable information. On the face of it, this sounds like a good idea; however, dig a little deeper and COPPA 2.0 tightens the government's grip on online platforms by adding a regulator (much like Ofcom in the OSB), enabling the U.S. government to roll out definitions of "harmful" content. Similarly, EARN IT exposes "platforms to civil lawsuits and state-level criminal charges for the child sexual abuse material (CSAM) posted by their users" (sauce).

As I pointed out previously with respect to the Baroness and her porn/sexual assault argument, no reasonable adult wants to be seen questioning a law that aims to eradicate child abuse, especially child sexual abuse. But EARN IT, returning to Congress for the third time since it was first introduced in 2020, is horrifically insidious, as it seeks to undermine end-to-end encryption rather than eradicate child sexual abuse. Legislators are banking on most people not reading past "child sex abuse", but in order to comply with the legislation, apps such as Facebook, WhatsApp, Instagram, iMessage, Telegram, Hotmail, Outlook, Windows, Discord, OneNote and OneDrive would be forced to scan the contents of a user's uploads or messages before they were encrypted "end-to-end". Think about that for a second. If your messages and uploads are being scanned, what is the point of encryption? Do you truly believe pedos are going to simply throw their arms in the air and go "Oh dear! That's us done. I can't diddle little kids anymore, big brother is watching!"? Of course they won't. They'll move to decentralised platforms, create their own encrypted platforms or simply move offline, leaving the general public under surveillance, just as the Online Safety Bill would.
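To make the point about scanning before encryption concrete, here is a minimal Python sketch of client-side scanning in principle. It is not how any bill, platform or vendor specifies it: the blocklist, `scan`, `send` and the `reports` list are hypothetical, exact SHA-256 matching stands in for the perceptual hashes or classifiers real proposals discuss, and a one-time pad stands in for a real end-to-end protocol. What it does show is the ordering: the scanner reads the full plaintext before any encryption happens, so the encryption hides nothing from the scanner or from whoever receives its reports.

```python
import hashlib
import secrets

# Hypothetical blocklist of SHA-256 digests handed to the client by an authority.
# Real proposals discuss perceptual hashes or ML classifiers; exact hashing is a simplification.
BLOCKLIST = {hashlib.sha256(b"known-bad-example").hexdigest()}


def scan(plaintext: bytes) -> bool:
    """Return True if the unencrypted message matches the blocklist."""
    return hashlib.sha256(plaintext).hexdigest() in BLOCKLIST


def encrypt_one_time_pad(plaintext: bytes) -> tuple[bytes, bytes]:
    """Stand-in for an end-to-end protocol: XOR with a fresh random key of equal length."""
    key = secrets.token_bytes(len(plaintext))
    ciphertext = bytes(p ^ k for p, k in zip(plaintext, key))
    return key, ciphertext


def decrypt_one_time_pad(key: bytes, ciphertext: bytes) -> bytes:
    """XOR with the same key again to recover the plaintext."""
    return bytes(c ^ k for c, k in zip(ciphertext, key))


def send(plaintext: bytes, reports: list[bytes]) -> tuple[bytes, bytes]:
    """Hypothetical client: scan first, then encrypt.

    Returns (key, ciphertext); in a real protocol only the ciphertext would
    travel, with the key agreed separately with the recipient.
    """
    # The scanner runs on the plaintext, before any encryption takes place...
    if scan(plaintext):
        # ...so a match is reported in the clear, whatever happens afterwards.
        reports.append(plaintext)
    return encrypt_one_time_pad(plaintext)


if __name__ == "__main__":
    reports: list[bytes] = []
    send(b"see you at 7", reports)
    send(b"known-bad-example", reports)
    print(reports)  # [b'known-bad-example']
```

Swapping the one-time pad for a proper protocol would not change the outcome, because the report is produced from the plaintext before encryption runs.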

Similar legislation has been proposed, or has received royal assent, in:-

Australia - "The Online Safety Act 2021", which also uses the "Think of the children" argument.

New Zealand - "The Films, Videos and Publications Classification (Urgent Interim Classification of Publications and Prevention of Online Harm) Amendment Bill 2020". Interestingly, this legislation used the Christchurch terror attack, a live-streamed terror attack, as justification for censoring content.

Canada - "Online Harms Bill", created because of the "Government's commitment to address online safety", though it ultimately failed for precisely the reasons I've outlined above. Much of the reasoning for this bill seems to have been "hate speech".

Of course, the Five Eyes aren't the only alliance proposing "online harms" legislation. The EU also wants to scan private messages for child abuse, which in reality means billions of people will no longer be able to have private conversations. Here the EU discusses the need to regulate online platforms, going as far as suggesting its guidelines are "not enough" and blaming "big tech" for political and social ills, including fuelling extremist politics. There, of course, is the crux of the issue: they do not like the political opinions of a vast swathe of the population and seek to control the narrative. It has never been about "harmful content" as championed by children's groups, feminists or others; it's about getting crowds worshipping the trojan horse.

Conclusion

In conclusion, I think it is very relevant to refer back to my point in the preface about priest holes. What were once gleaming bastions of freedom and democracy are turning, albeit slowly, into police states, with repeated attempts to force through legislation that outwardly seems friendly but has dire consequences for the rest of society. This will inevitably result in digital priest holes: inventive ways to circumvent access controls, tracking and data access, but also mass adoption of decentralised platforms, which will make the regulators, ombudsman and legislation discussed here irrelevant. A popular and recent example, albeit not driven by legislation, is the migration from centralised Twitter to decentralised Mastodon. Sure, it was Musk and his silly behaviour that drove that migration, but it's the same principle.

This trojan horse legislation is being celebrated by those who do not know better, either from a technical perspective or on a results basis. I understand; they want to do good for the less able, the vulnerable and the downtrodden. It's a noble effort, but they are being used, because they are gullible enough to be gobbled up by "greater good" narratives or, in some cases, are simply bitter that others find offensive material comical. These are not sound reasons to make legislation of this kind. Furthermore, I find the push towards censoring porn very tiresome. It reminds me of the recycled debate about sexual liberation being a very bad thing for society. That ship has already sailed: the majority want porn, the majority enjoy casual sex and the majority don't care very much for religious, moralistic dogma.

The Online Safety Bill is not indicative of a healthy democracy or a country with freedom of speech and expression. It is indicative of suppression, like that seen in Russia and China. The fact that legislators want to censor certain types of legal content for adults is highly concerning; what's more, they want to create mechanisms that allow complaints to be made outside the court system, where proof of harm would otherwise be required, thus privileging the aggrieved party. This creates bias in much the same way that Chinese "courts" might be biased against dissenters.

I do acknowledge that the internet contains harmful content, and that it is distressing for children to come across some of the more gory and sadistic material, but I do not believe the OSB adequately solves this problem. As I have previously pointed out, there is child porn on Mastodon instances. Such cases expose a lack of technical knowledge among legislators, or bad advice from experts and campaigners; legislators should know that simply regulating "online platforms" will do nothing to stem the flow of "harmful content" that children then find. Who will the Ombudsman contact about a self-hosted Mastodon instance? How will its operators be held accountable? What if they're not British? In my view, legislators should be pointing fingers squarely at parents, not seeking to enhance their own powers whilst screeching "Won't someone think of the children!". Parents are an easier target; they are already bound by parental responsibility to ensure the welfare of their children. Teach parents how to set up filters and parental controls, and how to block certain content and websites.
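For parents wondering what "blocking certain websites" involves, here is a minimal Python sketch of the domain-blocklist check at the heart of DNS-level filters, the kind that run on home routers or tools such as Pi-hole. It is an illustration under stated assumptions, not any product's implementation: `is_blocked` and `BLOCKED_DOMAINS` are hypothetical, and the entries use the reserved `.example` domain as placeholders. Blocking a domain also blocks its subdomains, which is why the check walks up the hostname one label at a time.

```python
# Hypothetical blocklist; the reserved ".example" names are placeholders, not real sites.
BLOCKED_DOMAINS: set[str] = {"adult-site.example", "gore-site.example"}


def is_blocked(hostname: str, blocklist: set[str] = BLOCKED_DOMAINS) -> bool:
    """Return True if the hostname or any of its parent domains is blocklisted."""
    # Normalise case and drop a trailing dot (the fully qualified form).
    labels = hostname.lower().rstrip(".").split(".")
    # Check "www.adult-site.example", then "adult-site.example", then "example",
    # so that subdomains inherit a block placed on their parent domain.
    for i in range(len(labels)):
        if ".".join(labels[i:]) in blocklist:
            return True
    return False


if __name__ == "__main__":
    for host in ["www.adult-site.example", "bbc.co.uk"]:
        print(host, "blocked" if is_blocked(host) else "allowed")
```

A filter like this is only one layer; as with the filters discussed in the Preface, a VPN or a different DNS resolver can route around it.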

I'm also uneasy about where the legislation might lead once implemented. "Online harm" is a very broad term. It's not unforeseeable that legislators might move from "Facebook" to "full-on operating system invasion"; after all, most operating systems use lots of online services and stay constantly connected. If you are suspected of accessing or creating harmful material, can you expect to have your OS infiltrated and monitored? How about being tracked if you are suspected of being suicidal? Perhaps we'll see the emergence of Online Behaviour Corrections Officers who will re-educate badly behaved netizens.

One thing is for sure: the Online Safety Bill is not good for the United Kingdom, or for the wider diversity of thought, speech, expression and creativity.